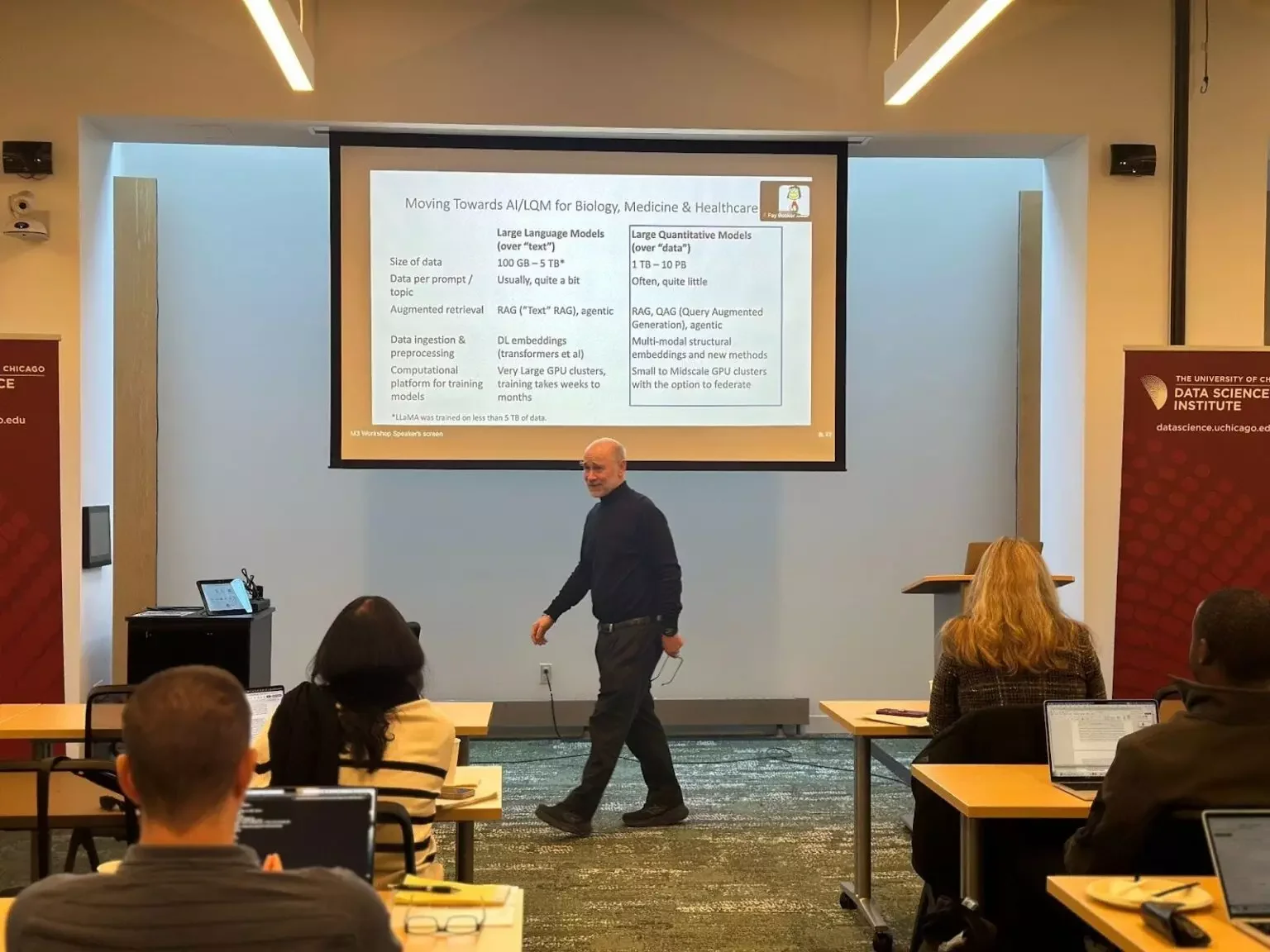

The Center for Translational Data Science (CTDS) and the Data Science Institute jointly hosted the Meshes of Midscale Models (M3) Workshop on January 28, bringing together researchers working at the frontier of AI in biomedical research.

A fundamental obstacle to using AI in biomedicine is the scarcity of high-quality data to train large language models, generative AI, and large quantitative models. The Meshes for Midscale Models (M3) Initiative takes a multidisciplinary approach to the challenge, integrating expertise in biology, medicine, computer science, machine learning, and economics. Rather than relying on massive proprietary models, it focuses on developing small and midscale AI models that can operate effectively in resource-limited environments. The approach is already showing results: earlier M3 work demonstrated that pretrained machine learning models can help diagnose skin cancers in settings where specialist dermatologists are scarce.